AI reality check: New NPUs don’t matter as much as you’d think

You probably already have an AI PC.

In the past few months, Intel and PC makers have beaten the drum of the AI PC loudly, and in concert with AMD and Qualcomm. It’s no secret that “AI” is the new “metaverse” (you know, that thing everybody was talking up a couple of years ago), and executives and investors alike want to use AI to boost sales and stock prices.

And it’s true that AI does depend upon the NPUs present in chips like Intel’s Core Ultra, the brand that Intel is positioning as synonymous with on-chip AI. The same goes for AMD’s Ryzen 8000 series, which beat Intel to the desktop with an NPU, as well as Qualcomm’s Snapdragon X Elite.

It’s just that the integrated NPUs found within the Core Ultra right now (Meteor Lake, with Lunar Lake waiting in the wings) do not play the outsized role in AI computation that they’re being given credit for. Instead, the more traditional sources of computational horsepower (the CPU and especially the GPU) contribute more to the task.

It’s important to note several things: First, actually benchmarking AI performance is something absolutely everyone is wrestling with. “AI” is composed of rather divergent tasks: image generation, such as using Stable Diffusion; large language models (LLMs), the chatbots popularized by the (cloud-based) Microsoft Copilot and Google Bard; and a host of application-specific enhancements, such as the AI improvements in Adobe Premiere and Lightroom. Combine the numerous variables in LLMs alone (frameworks, models, and quantization, all of which affect how chatbots will run on a PC) with the pace at which these variables change, and it’s very difficult to pick a winner, let alone one that holds for more than a moment in time.

The next point, though, is one that we can state with some certainty: Benchmarking works best when you eliminate as many variables as possible. And that’s what we can do with one small piece of the puzzle: How much do the CPU, GPU, and NPU each contribute to AI calculations performed by Intel’s Core Ultra chip, Meteor Lake?

Mark Hachman / IDG

The NPU isn’t the engine of on-chip AI right now. The GPU is

We’re not trying to establish how well Meteor Lake performs in AI. What we can do is perform a reality check on how much the NPU matters in AI.

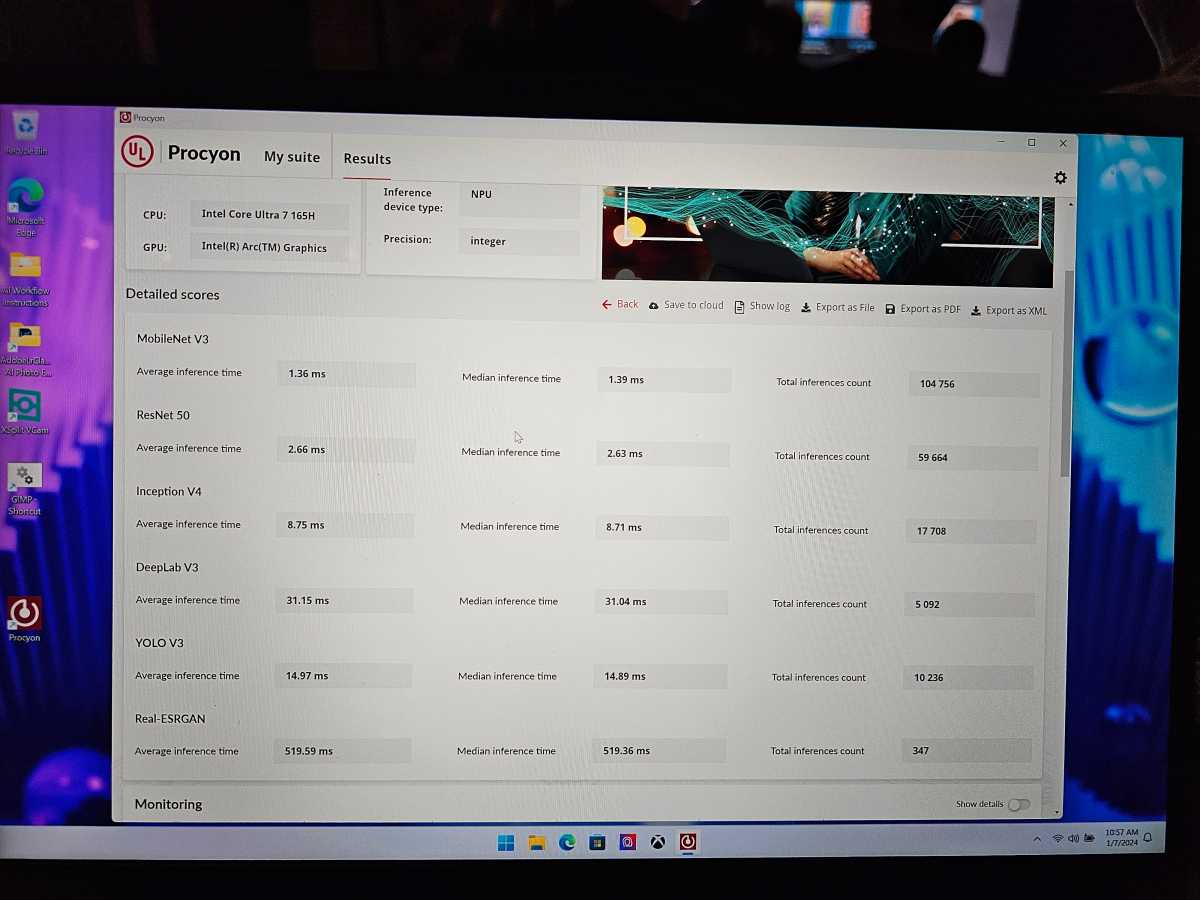

The specific test we’re using is UL’s Procyon AI inferencing benchmark, which calculates how effectively a processor runs when handling various large language models. Specifically, it allows the tester to break the results down, comparing the CPU, GPU, and NPU.

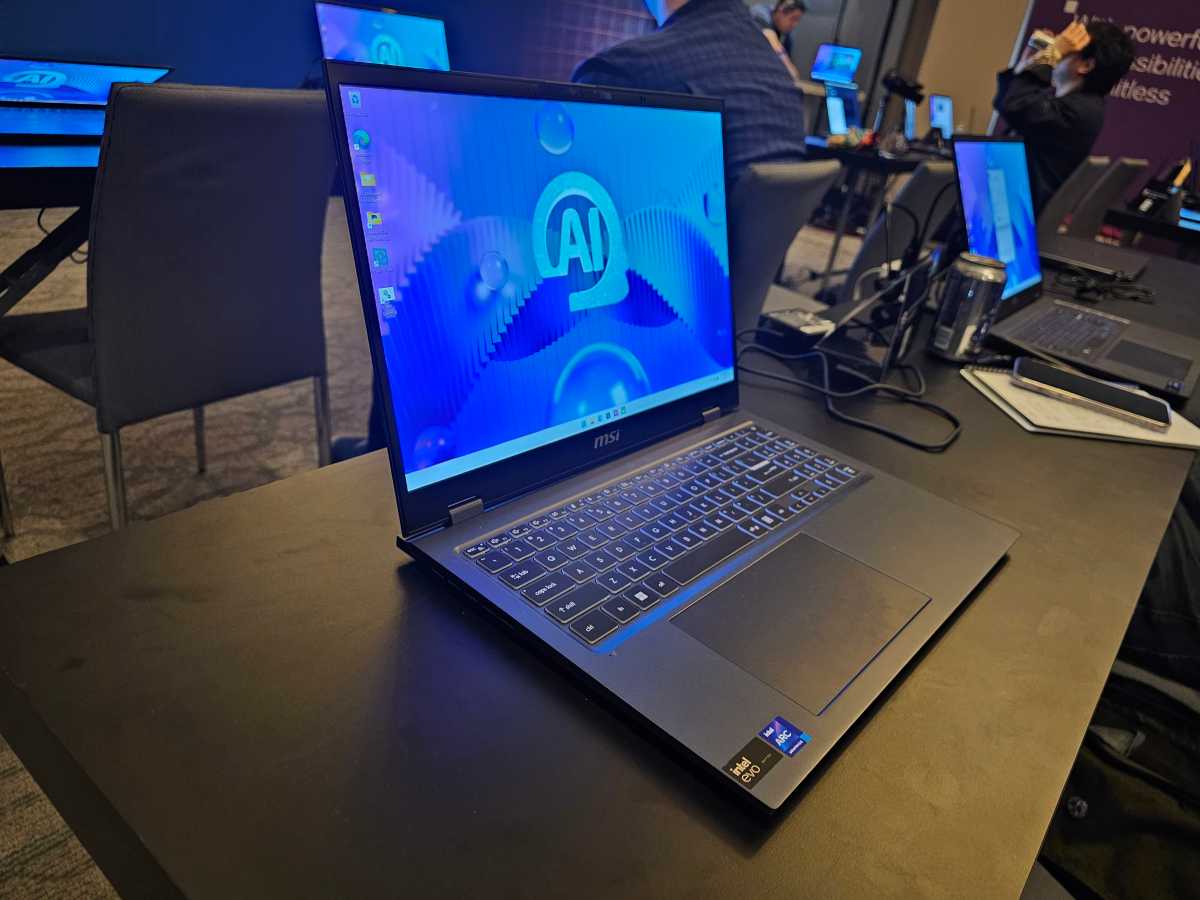

In this case, we tested the Core Ultra 7 165H inside an MSI laptop, provided for testing during an Intel benchmarking day at CES 2024. (Much of my time was spent interviewing Dan Rogers of Intel, but I was able to run some tests.) Procyon runs the LLMs on top of the processor and calculates a score, based upon performance, latency, and so on.

Without further ado, here are the numbers:

- Procyon (OpenVINO) NPU: 356

- Procyon (OpenVINO) GPU: 552

- Procyon (OpenVINO) CPU: 196

The Procyon tests proved several points: First, the NPU does make a difference; compared with the performance and efficiency cores elsewhere in the CPU, the NPU outperforms them by 82 percent, all by itself. But the GPU’s AI score is 182 percent higher than the CPU’s, and it outperforms the NPU by 55 percent.
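For anyone who wants to check the math, the percentages follow directly from the three Procyon scores. Here is a minimal sketch of that arithmetic; the function names `percent_faster` and `compare` are ours, purely for illustration, and are not part of Procyon or OpenVINO.

```python
# Procyon AI inference scores reported above (OpenVINO, Core Ultra 7 165H).
SCORES = {"NPU": 356, "GPU": 552, "CPU": 196}


def percent_faster(score: float, baseline: float) -> float:
    """Return how much higher `score` is than `baseline`, in percent."""
    return (score / baseline - 1.0) * 100.0


def compare(scores: dict[str, float]) -> dict[str, float]:
    """Compute the three pairwise comparisons quoted in the article."""
    return {
        "NPU vs CPU": percent_faster(scores["NPU"], scores["CPU"]),
        "GPU vs CPU": percent_faster(scores["GPU"], scores["CPU"]),
        "GPU vs NPU": percent_faster(scores["GPU"], scores["NPU"]),
    }


if __name__ == "__main__":
    for label, pct in compare(SCORES).items():
        print(f"{label}: {pct:.0f}% higher")
```

Rounded to whole numbers, that works out to 82 percent (NPU over CPU), 182 percent (GPU over CPU), and 55 percent (GPU over NPU). Note that these are ratios of benchmark scores on one laptop, not a general statement about every workload.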

Put another way: If you value AI, buy a big, beefy graphics card or GPU first.

But the second point is less obvious: Yes, you can run AI applications on a CPU or GPU, without any need for a dedicated AI logic block. All the Procyon tests show is that some blocks are more effective than others.

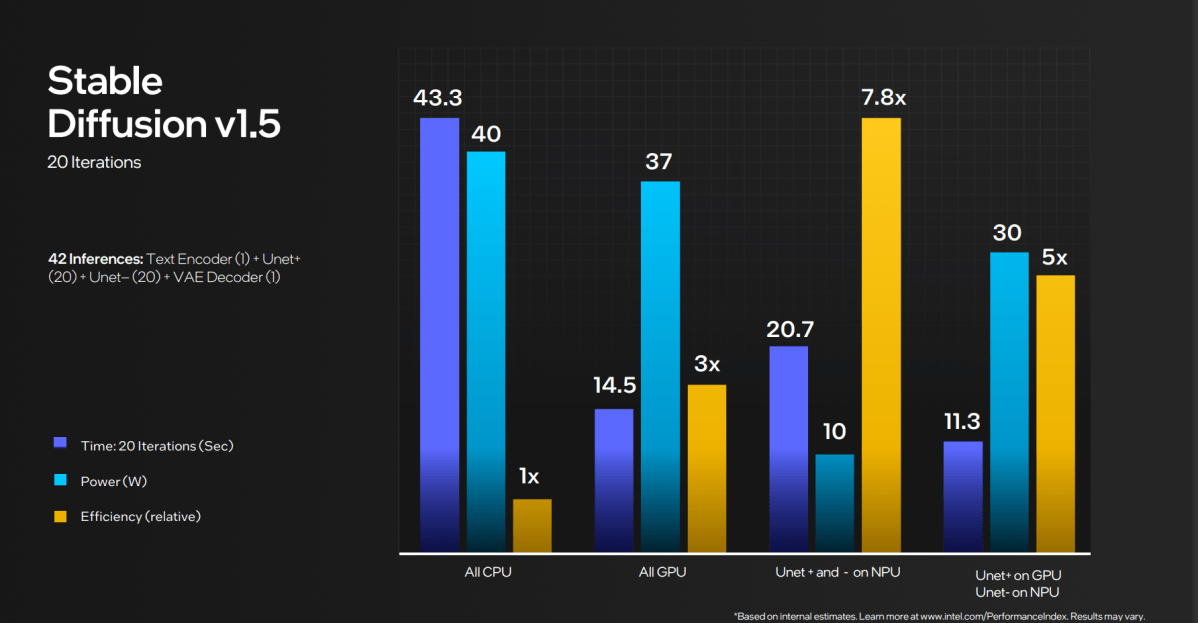

Intel’s claim is that the NPU is more efficient. In the real world, “efficiency” is chipmaker code for “long battery life.” At the same time, Intel has tried to emphasize that the CPU, GPU, and NPU can work together.

In this case, the NPU’s efficiency applies to AI applications that operate over time, and probably on battery. The best example of that is a lengthy Microsoft Teams call from the depths of a hotel room or conference center (just like CES!) where AI is being used to filter out noise and background activity.

Historically, AI art applications like Stable Diffusion launched first using the power of your GPU, alongside a ton of available VRAM, to generate local AI art. But over time AI applications have evolved to run on less powerful configurations, including mostly on the CPU. This is a familiar pattern: You’re not going to run a graphics-intensive game like Crysis well on integrated hardware, but it will run, just very, very slowly. AI LLMs and chatbots will do the same, “thinking” for a long time about their responses and then “typing” them out very slowly. LLMs that can run on a GPU will perform better, and cloud-based solutions will likely be much faster.

Nonetheless, AI will evolve

It’s interesting, too, that (as of this writing) UL’s Procyon app recognizes the CPU and the GPU in the AMD Ryzen AI-powered Ryzen 7040, but not the NPU. We’re in the very early days of AI, when not even the basic capabilities of the chips themselves are recognized by the applications that are designed to use them. This just complicates testing even further.

The point is, though, that you don’t need an NPU to run AI on your PC, especially if you already own a gaming laptop or desktop. NPUs from AMD, Intel, and Qualcomm will be nice to have, but they’re not must-haves, either.

It won’t always be this way, though. Intel is promising that the NPU in the upcoming Lunar Lake chip, due at the end of this year, will have three times the NPU performance. It’s not saying anything about the CPU or GPU performance. It’s very likely that, over time, the NPU’s performance in various PC chips will grow so that their AI performance becomes hugely disproportionate compared with the other parts of the chip. And if not, a slew of AI accelerator chip startups have plans to become the 3Dfxs of the AI world.

For now, though, we’re here to take a deep breath as 2024 begins. New AI PCs matter, as do the new NPUs. But consumers probably won’t care as much as chipmakers do that AI is running on their PC, versus the cloud, no matter how loud the hype is. And for those who do care, the NPU is just one piece of the overall solution.