Nightshade, a free tool to poison AI data scraping, is now available to try

TechSpot means tech analysis and advice you can trust.

A hot potato: Generative AI models have to be trained on an inordinate amount of source material before they are ready for prime time, and that can be a problem for creative professionals who do not want models learning from their content. Thanks to a new software tool, visual artists now have another way to say “no” to the AI-feeding machine.

Nightshade was initially introduced in October 2023 as a tool to challenge large generative AI models. Created by researchers at the University of Chicago, Nightshade is an “offensive” tool that can protect artists’ and creators’ work by “poisoning” an image and making it unsuitable for AI training. The program is now available for anyone to try, but it likely won't be enough to turn the modern AI business upside down.

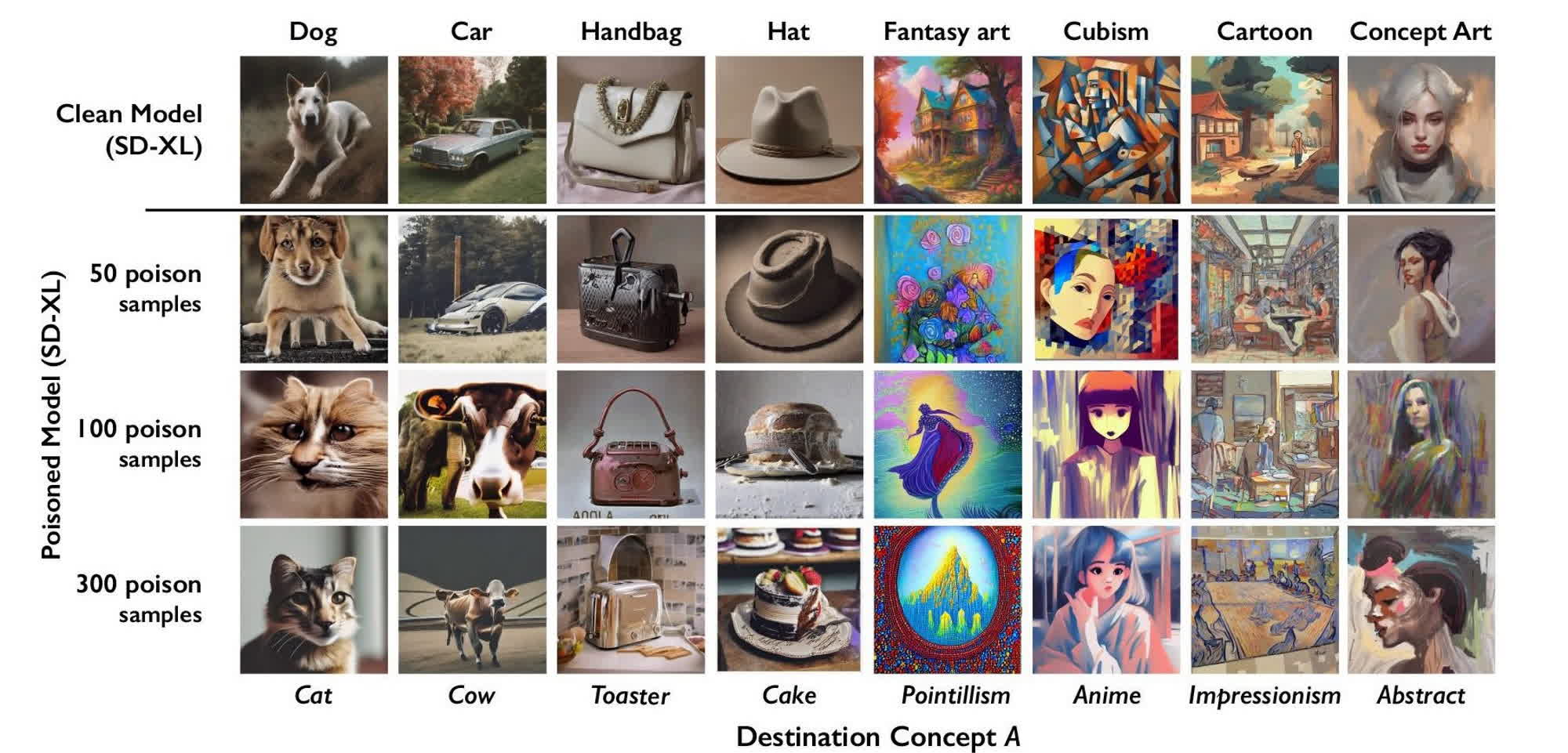

Images processed with Nightshade can make AI models exhibit unpredictable behavior, the researchers said, such as generating an image of a floating handbag for a prompt that asked for an image of a cow flying in space. Nightshade can deter model trainers and AI companies that do not respect copyright, opt-out lists, and do-not-scrape directives in robots.txt.
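For context, a do-not-scrape directive is simply a rule in a site's robots.txt that a well-behaved crawler is expected to check before fetching a page. The following is a minimal sketch of that check using Python's standard `urllib.robotparser` module; the crawler name `ExampleAIBot`, the `example.com` URL, and the helper `may_fetch` are hypothetical names chosen for illustration, not anything taken from the researchers' work.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks one (made-up) AI crawler, allows everyone else.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Allow: /
"""


def may_fetch(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/gallery/cow.png"
    print(may_fetch("ExampleAIBot", url))  # the blocked crawler
    print(may_fetch("SomeBrowser", url))   # any other user agent
```

Compliance with such a rule is voluntary. A scraper can skip the check entirely, which is the gap a tool like Nightshade is meant to address.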

Nightshade is computed as a “multi-objective optimization that minimizes visible changes to the original image,” the researchers said. While humans see a shaded (Nightshade-processed) image that is largely unchanged from the original artwork, an AI model would perceive a very different composition. AI models trained on Nightshade-poisoned images would generate an image resembling a “dog” from a prompt asking for a cat. Unpredictable results could make training on poisoned images less useful, and could prompt AI companies to train exclusively on free or licensed content.
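One generic way to write down an optimization of this kind is shown below. It is an illustration, not the researchers' published formulation, and every symbol is an assumption: $x$ is the original image, $\delta$ the added perturbation, $F$ a hypothetical feature extractor standing in for how a model “sees” an image, $x_a$ an example image of the decoy concept, $d$ a hypothetical measure of how visible the change is to a human, and $\lambda > 0$ a weight that trades the two goals off.

```latex
\min_{\delta} \;
  \bigl\lVert F(x + \delta) - F(x_a) \bigr\rVert_2^2
  \;+\; \lambda \, d(x,\; x + \delta)
```

The first term pulls the shaded image toward the decoy concept in the model's feature space, while the second penalizes changes a human viewer would notice.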

A shaded image of a cow in a green field can be transformed into what AI algorithms will interpret as a large leather purse lying in the grass, the researchers said. If artists provide enough shaded images of a cow to the algorithm, an AI model will start generating images of leather handles, side pockets with a zipper, and other unrelated compositions for cow-focused prompts.

Images processed by Nightshade are robust enough to survive traditional image manipulation, including cropping, compressing, noising, or pixel smoothing. Nightshade can work together with Glaze, another tool designed by the University of Chicago researchers, to further disrupt content abuse.

Glaze offers a “defensive” approach to content poisoning and can be used by individual artists to protect their creations against “style mimicry attacks,” the researchers explained. Conversely, Nightshade is an offensive tool that works best if a group of artists band together to disrupt an AI model known for scraping their images without consent.

The Nightshade team has released the first public version of the namesake AI poisoning application. The researchers are still testing how their two tools interact when processing the same image, and they will eventually combine the two approaches by turning Nightshade into an add-on for Webglaze.