Opinion: Is anyone going to make money in AI inference?

A big topic in semiconductors today is the recognition that the real market opportunity for AI silicon is going to be the market for AI inference. We think this is right, but we are starting to wonder if anyone is going to make any money from this.

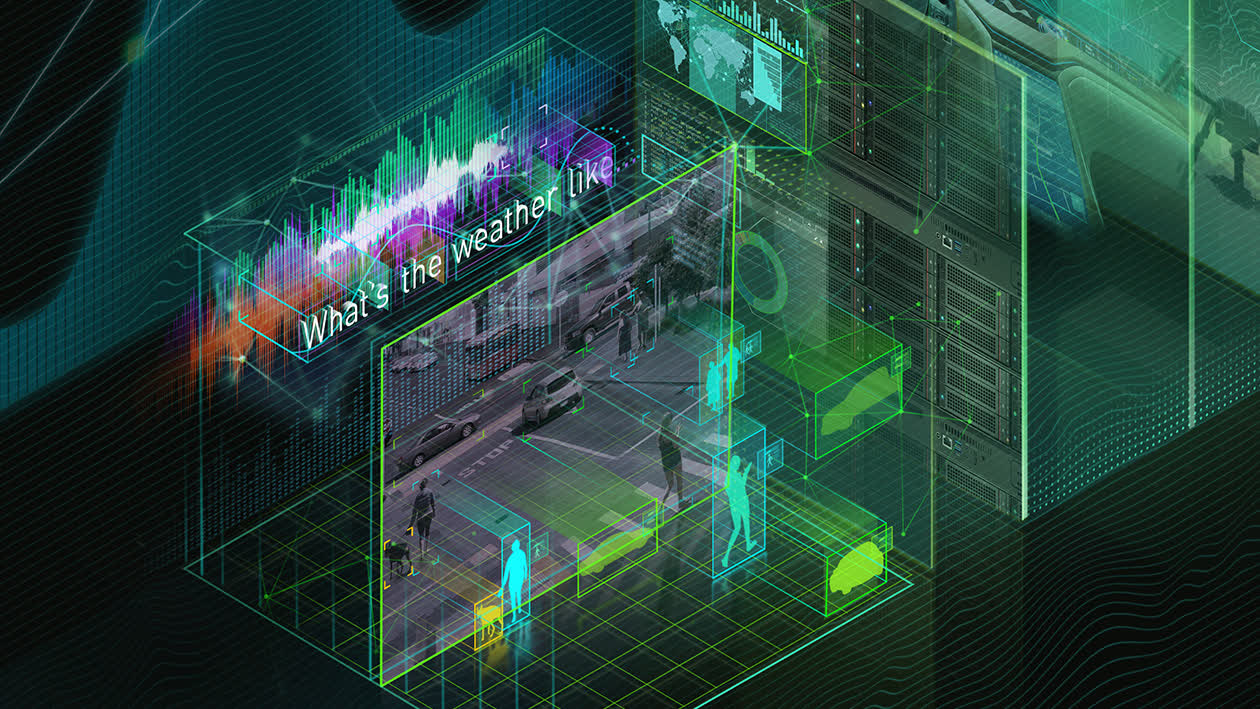

The market for AI inference is important for two reasons. First, Nvidia seems to have a lock on AI training. True, AMD and Intel have offerings in this space, but let’s classify those as “aspirational” for now. For the moment, this is Nvidia’s market. Second, the market for AI inference is likely to be much larger than the training market. Intel’s CEO Pat Gelsinger has a good analogy for this: weather models. Only a few entities need to create weather prediction models (NASA, NOAA, etc.), but everyone wants to check the weather.

Editor’s Note:

Guest author Jonathan Goldberg is the founder of D2D Advisory, a multi-functional consulting firm. Jonathan has developed growth strategies and alliances for companies in the mobile, networking, gaming, and software industries.

The same holds for AI: the utility of models will be derived from the ability of end users to make use of them. As a result, the importance of the inference market has been a consistent theme at all the analyst and investor events we have attended recently, and even Nvidia has shifted its positioning lately to talk a lot more about inference.

Of course, there are two pieces of the inference market: cloud and edge. Cloud inference takes place in the data center and edge inference takes place on the device. We have heard people debate the definition of these two recently, and the boundaries can get a little blurry. But we think the breakdown is fairly straightforward: if the company operating the model pays for the capex, that is cloud inference; if the end user pays the capex (by buying a phone or PC), that is edge inference.

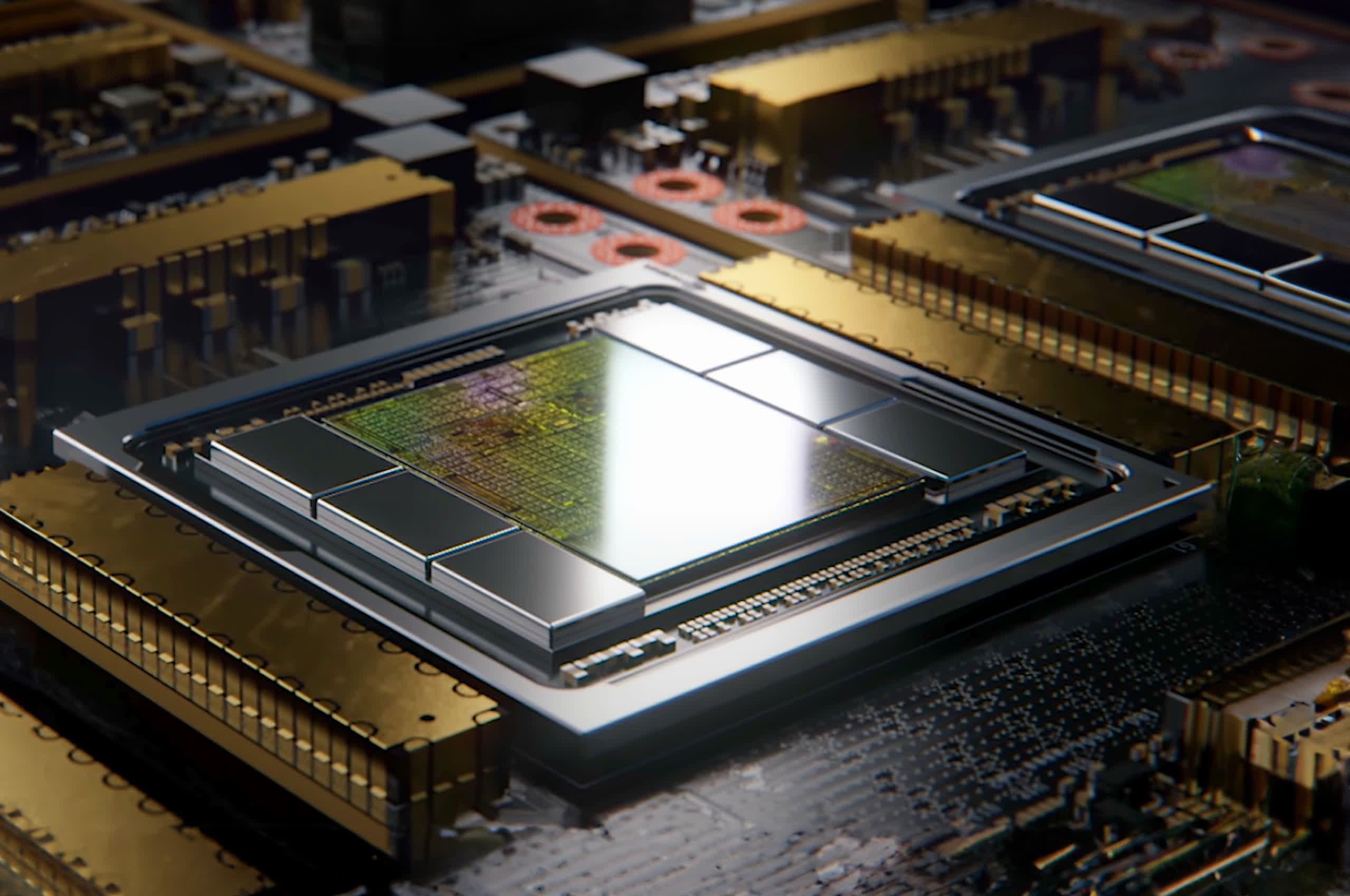

Cloud inference is likely to be the most interesting contest to watch. Nvidia has articulated a very strong case for why it will transfer its dominance in training to inference. Put simply, there is a lot of overlap, and Nvidia has CUDA and other software tools to make the connection between the two very easy. We suspect this will appeal to many customers; we are in an era of “You don’t get fired for buying Nvidia”, and the company has a lot to offer here.

On the other hand, Nvidia’s big competitors are going to push very hard for their share of this market. Moreover, the hyperscalers who will likely consume the majority of inference silicon have the ability to break the reliance on Nvidia, whether by designing their own silicon or by making full use of the competition. We expect this to be the center of a lot of attention in coming years.

The market for edge inference is a much more open question. For starters, no one really knows how much AI models will rely on the edge. The companies that are operating these models (especially the hyperscalers) would really love for edge inference to predominate. This would greatly reduce the amount of money they have to spend building all those cloud inference data centers. We suspect that the economics of AI may not pencil out if this is not possible.

That being said, this vision comes with a significant caveat: will consumers actually be willing to pay for AI? Today, if we asked the average consumer how much they would pay to run ChatGPT on their own computer, we suspect the answer would be $0. Yes, they are willing to pay $20 a month to use ChatGPT, but would they be willing to pay more to get it to run locally? The benefit of this is not entirely clear; perhaps they would get answers more quickly, but ChatGPT is already fairly fast when delivered from the cloud. And if consumers are not willing to pay more for PCs or phones with “AI capabilities”, then the chip makers will not be in a position to charge premiums for silicon with those capabilities. We mentioned that Qualcomm faces this problem in smartphones, but the same applies to Intel and AMD for PCs.

We have asked everyone about this and have yet to get a clear answer. The reality is that we do not know what consumers would be willing to pay because we do not really know what AI will do for consumers. When pressed, the semis executives we spoke with all tend to default to some version of “We have seen some incredible demos, coming soon” or “We think Microsoft is working on some incredible things”. These are fair answers; we are not (yet) full-blown AI skeptics, and we imagine there are some incredible projects in the works.

This reliance on software begs the question as to how much value there is for semis makers in AI. If the value of these AI PCs depends on software companies (especially Microsoft), then it is reasonable to assume that Microsoft will capture the bulk of the value for consumer AI services. Microsoft is something of an expert at this. There is a very real possibility that the only boost that comes from AI semis will be that they spark a one-time device refresh. That will be good for a year or two, but it is far smaller than the huge opportunity some companies are making AI out to be.