First PC AI accelerator cards from MemryX, Kinara debut at CES

Since Intel doesn’t have a desktop CPU with AI capabilities until later this year, PC makers are turning to chip startups as an alternative, and the future may well be in the Lenovo ThinkCentre Neo Ultra, potentially with AI cards from MemryX and Kinara inside.

Lenovo will launch the ThinkCentre Neo Ultra in June for about $1,000, product manager Bryan Lin said from Lenovo’s booth at CES 2024. While Lenovo’s documentation doesn’t formally include either AI processor, their inclusion is likely. And the small content-creation desktop was at CES showcasing both AI cards.

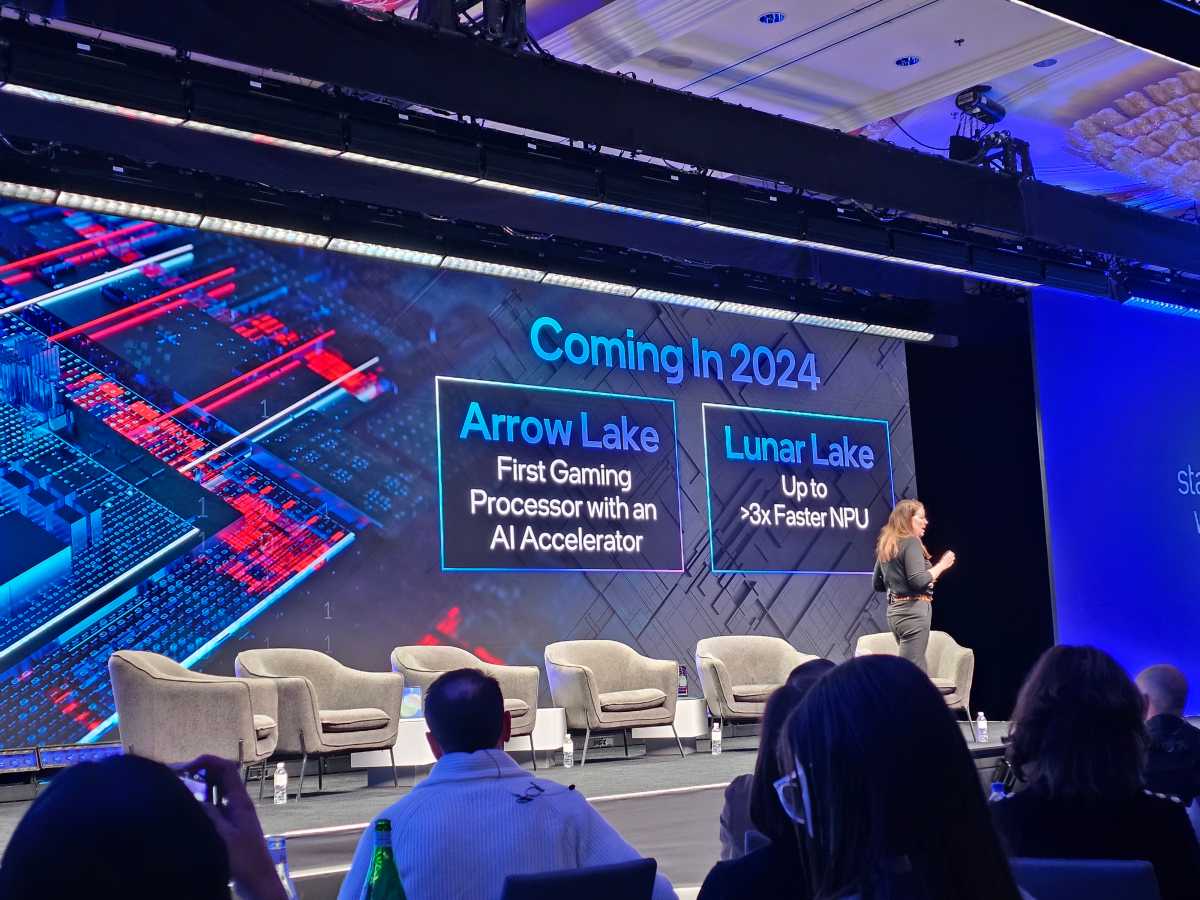

While AMD, Intel and Qualcomm have all shown mobile processors with built-in AI NPUs, only AMD has announced a desktop Ryzen APU with an NPU inside. Intel, which holds the dominant share of the PC processor market, will have to wait until the launch of Arrow Lake to make an NPU available to desktop PC makers.

In the meantime, more PC makers are realizing that an “AI PC” can actually be built with just a CPU and a GPU, while NPUs provide more power-efficient AI. If you’re a desktop PC maker, with historically fewer concerns about power consumption, that may be enough. But businesses, which want to apply AI to making money, want AI now, and they do care about minimizing power consumption at scale. In this, at least, the business market may push ahead of consumer PCs.

Mark Hachman / IDG

“What we’re seeing now is that the discrete graphics card is too hungry in terms of form factor and power, thermal design, et cetera,” Lin said. “So an NPU card drawing about 5 to 10 watts can give us a certain level of AI capabilities.”

But what about when Arrow Lake debuts?

“With Arrow Lake what I’m getting is that it’s still very limited [in terms of] power,” Lin said. “So, at least eighteen to twenty-four months from now, I think discrete [AI accelerators] will still be part of it. And especially for desktop, where we don’t have the limitation of battery.”

The ThinkCentre Neo Ultra will include up to an Intel Core i9 vPro processor of an undisclosed architecture, with up to 64GB of DDR5-5200 memory. It will also include a creator-class Nvidia GeForce RTX 4060 GPU and up to 4TB of SSD storage, with a 350W internal power supply. It’s a 3.6-liter chassis, measuring 7.67 x 7.67 x 4.21 inches.

Lenovo has what it is calling an AI engine, which routes each workload to wherever it fits best, Lin said.

Lin said that there are a number of AI chip startups that the company is working with, including MemryX and Kinara, the two AI chip companies being shown off at the booth.

Meet MemryX, one of the first AI accelerators

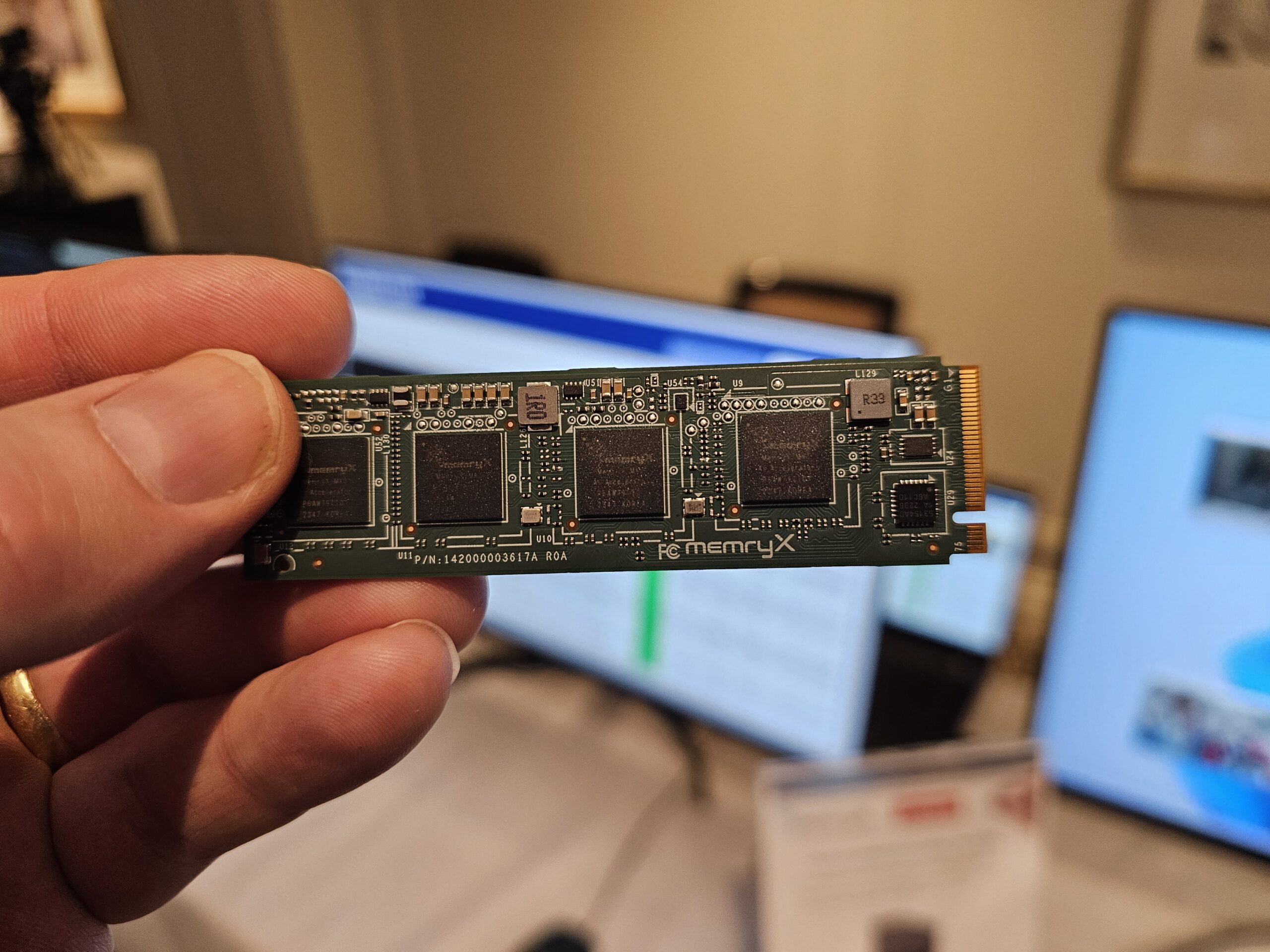

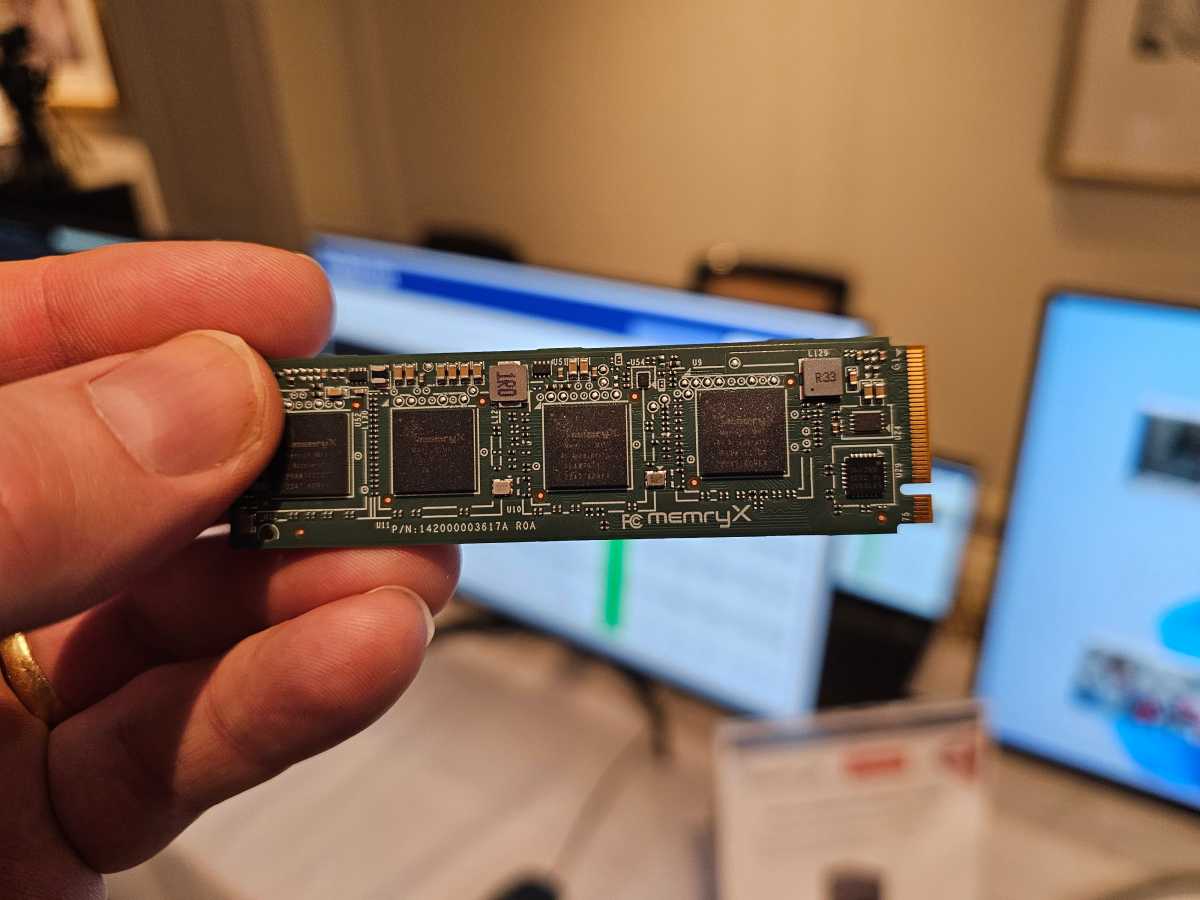

MemryX manufactures the MX3 Edge AI Accelerator. The company’s development kit, and what Lenovo is showing off inside the ThinkCentre, consists of four MX3 chips mounted on an M.2 PCI Express card (Gen3, a little surprisingly), though it can run on a USB 3.2 card as well.

MemryX rates each MX3 as capable of 10 TFLOPS (trillion floating-point operations per second) instead of the more traditional TOPS. That’s 40 TFLOPS per card, with four chips per card. The company uses TFLOPS because the MX3 defaults to 16-bit floating-point operations and 8-bit weights, rather than the integer operations that are a more common metric, according to Roger Peene, the vice president of product and business development for MemryX.

“When there’s an opportunity to use discrete solutions, everyone will use it until Intel or AMD integrates it,” Peene said. “So everyone knows Intel’s way behind… they’ve amped up their marketing. They’re clearly not happy that Lenovo would pick a startup to run AI in a PC. So that’s kind of the story.”

Each MX3 consumes 1 to 2 watts on average, Peene said. The chips support Linux, Android and Windows, as well as the TensorFlow, TensorFlow Lite, PyTorch, ONNX and Keras frameworks.
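As a rough back-of-the-envelope check, the per-chip figures quoted here can be scaled up to a four-chip card. The sketch below is illustrative only: it assumes that peak throughput and average power both scale linearly with chip count, which MemryX has not confirmed, and the function and constant names are hypothetical.

```python
# Back-of-the-envelope scaling of the per-chip figures quoted in the article.
# Assumption (not confirmed by MemryX): throughput and power scale linearly
# with the number of chips, and the card adds no overhead of its own.

CHIPS_PER_CARD = 4            # four MX3 chips per M.2 card
TFLOPS_PER_CHIP = 10.0        # vendor-rated peak per chip
WATTS_PER_CHIP = (1.0, 2.0)   # (low, high) average draw per chip


def card_estimate(chips=CHIPS_PER_CARD):
    """Return rough card-level throughput, power range and efficiency range."""
    tflops = chips * TFLOPS_PER_CHIP
    watts_low = chips * WATTS_PER_CHIP[0]
    watts_high = chips * WATTS_PER_CHIP[1]
    return {
        "tflops": tflops,
        "watts": (watts_low, watts_high),
        # Worst case uses the high power figure, best case the low one.
        "tflops_per_watt": (tflops / watts_high, tflops / watts_low),
    }


if __name__ == "__main__":
    print(card_estimate())
```

Under those assumptions, a four-chip card works out to 40 TFLOPS at roughly 4 to 8 watts for the chips alone, which is in the same range as the 5 to 10 watts Lin cited for an NPU card.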

Each chip can run a model with 10 million 8-bit parameters, scaled as needed. Out of the box, the MX3 can run YOLO v7 tiny at 416×416, 375fps (x2) without pruning or retraining, or SSDMobileNet (224×224) at 1403fps.
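To make the capacity figure concrete, here is a minimal sketch of how many chips a given model might need. It assumes capacity scales linearly across chips (the article says only "scaled as needed") and counts weights alone, ignoring activations and any runtime overhead. The helper names are hypothetical and are not part of any MemryX tooling.

```python
# Rough sizing sketch based on the quoted "10 million 8-bit parameters" per chip.
# Assumptions: capacity adds up linearly across chips, and only weights count.

PARAMS_PER_CHIP = 10_000_000   # 8-bit parameters one chip can hold
BYTES_PER_PARAM = 1            # 8-bit weights are one byte each


def chips_needed(param_count):
    """Smallest number of chips whose combined capacity fits the model."""
    if param_count <= 0:
        raise ValueError("param_count must be positive")
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-param_count // PARAMS_PER_CHIP)


def weight_megabytes(param_count):
    """Size of the 8-bit weights in megabytes (1 MB = 1,000,000 bytes)."""
    return param_count * BYTES_PER_PARAM / 1_000_000


if __name__ == "__main__":
    for params in (6_000_000, 25_000_000, 40_000_000):
        print(params, chips_needed(params), weight_megabytes(params))
```

By this estimate, a four-chip card would top out at about 40 million 8-bit parameters, or roughly 40MB of weights.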

We haven’t had a chance to talk to Kinara, though the company launched its Ara-2 Edge AI processor last fall. “As an example of its capabilities for processing Generative AI models, Ara-2 can hit 10 seconds per image for Stable Diffusion and tens of tokens/sec for LLaMA-7B,” the company said in a press release.

Both the MemryX and Kinara AI chips are being positioned first as AI for image recognition, with one MemryX demo showing off how it could detect whether construction workers had donned the proper protective equipment. Still, AI can be used for all kinds of applications: games, avatars, local language models/chatbots, and more.

What’s more important, however, is that companies like Nvidia, Rendition, 3Dfx, and others launched years ago as makers of 3D accelerators, and the survivors now dominate the content-creation and gaming industry after some fell by the wayside. Expect a new wave of AI accelerator cards to follow them.

Clarification: The MemryX MX3 is capable of 10 TFLOPS per chip, or 40 per card.